German public broadcaster ZDF recalled a US correspondent in late February after an AI-generated clip appeared in a report on US Immigration and Customs Enforcement (ICE) agents.

The broadcaster apologised for using the clip, which depicted children clinging to their mother as she is arrested by ICE agents. The video carried a Sora watermark, a clear indication that it was created with OpenAI’s video tool.

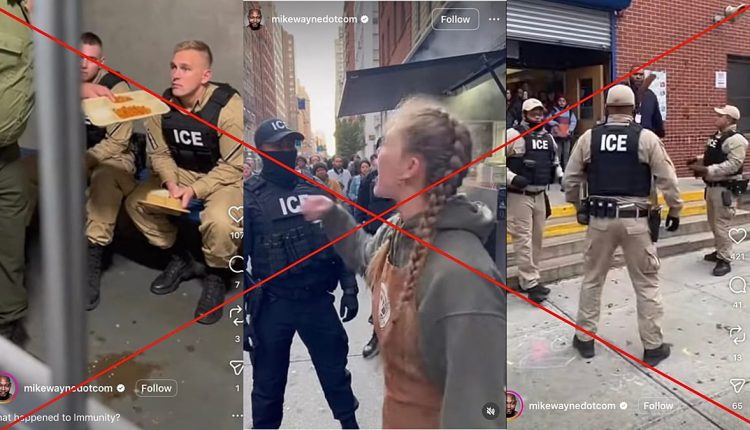

The Cube, Euronews’ fact-checking team, found that the video is one of thousands of AI-generated clips of ICE agents that are flooding social media, many without clear labelling and collectively amassing millions of views.

Consistent pattern

Data shared with The Cube by AI detection firm TruthScan shows more than 200 short clips portraying ICE agents being chased by teachers, fighting in bars and being arrested by the NYPD (New York City Police Department) in the subway.

TruthScan said it scraped more than 200 videos from a single Instagram account. The videos are short, dramatic and emotionally charged.

The Cube found that the account is still posting AI-generated clips of ICE en masse, with some gaining thousands, and others millions, of views.

Various clues indicate that the videos aren’t real: in one example, steam appears to rise through a solid surface during a confrontation between an ICE agent and a café worker, a subtle sign that the clip is synthetic.

According to TruthScan, each video was exactly 10 or exactly 15 seconds long, strongly suggesting they were generated using OpenAI’s Sora 2 video model, which offers users 10-second and 15-second video options.

Unlike many AI-generated videos that contain a watermark, the majority of these ICE clips do not.

Similarly, in November last year, 404 Media reported that AI-generated clips of ICE agents originating from a Facebook account went viral, with one alone generating 4 million views. The videos have since been removed.

Wider risk to public trust

Experts say the risk extends beyond individual clips being labelled incorrectly to the broader proliferation of fake content on sensitive political issues, which can undermine public trust.

“There is significant risk to collective responses to the actions of law enforcement, both negative and positive,” said Ari Abelson, co-founder of OpenOrigins, a media authenticity company that detects deepfakes.

“It’s a concern for law enforcement agencies who want to maintain their trust, but it’s also important to institutions that hold them accountable when they overstep,” Abelson told The Cube.

As with ZDF’s broadcast, AI-generated ICE content is not confined to anonymous accounts. Sometimes it even reaches the highest echelons of public office.

In January, the White House posted a digitally altered image of activist and lawyer Nekima Levy Armstrong following her arrest at an ICE-related protest in Minnesota.

An analysis shows the altered image depicts Armstrong as darker-skinned and visibly sobbing compared to the original image.

The White House responded to questions about the image’s manipulation with a post from Deputy Communications Director Kaelan Dorr, which read, “YET AGAIN to the people who feel the need to reflexively defend perpetrators of heinous crimes in our country I share with you this message: Enforcement of the law will continue. The memes will continue.”

Armstrong said that she was “disgusted” with the White House for posting the image.

According to Abelson, the concern arises when there are no guardrails, or safeguards, to clearly indicate that the content is fake.

“Political cartoons have always been used in politics, and these are being used in very similar ways,” he said. “The key difference is how hyper-realistic the videos are becoming.”

“Social and information spaces require one thing: the ability to prove a photo and video is real, so we can enjoy the fake ones as fictions, and feel the gravity of real-life events,” he added.
